Foreign Information Manipulation and Interference (FIMI) refers to a primarily non-illegal pattern of behavior that poses a threat or has the potential to negatively impact values, processes, and political procedures. Such activities are characterized by their manipulative nature and are executed intentionally and in a coordinated manner. Those responsible for these activities can be either state actors or non-state actors, including their proxies both within and beyond borders.

Tier3 has been monitoring information manipulation under the label of ‘Disinformation-Misinformation’ since 2017. Although disinformation is a significant part of information manipulation, the term ‘information manipulation’ emphasizes the behavior itself rather than the accuracy of the content, and is therefore favored over ‘disinformation.’ The concept of manipulative behavior also aligns with other threat categories because it clearly signals an intent to act maliciously with adverse consequences, which is why it is more aptly defined as a cybersecurity threat.

The relationship between information manipulation and cybersecurity is a topic of ongoing debate. Acknowledging that relationship does not imply that cybersecurity alone can solve the problem of information manipulation. However, it is argued that cybersecurity practices can create a more secure environment for information, ultimately benefiting the efforts of cybersecurity professionals. Information manipulation and related operations should be regarded as a cybersecurity threat because they directly affect at least one of the three components of the information security model (confidentiality, integrity, and availability), particularly the integrity of information. Furthermore, by distorting the information space, information manipulation can misguide and hinder the operational response to crises. It often serves as a stepping stone or element of more complex hybrid attacks involving other cybersecurity threats, such as DDoS attacks. Information manipulation operations also frequently exploit other cybersecurity principles (e.g., authenticity and accountability) and leverage various cybersecurity tactics, techniques, and procedures, which justifies classifying information manipulation as one of the primary categories of cyber threats.

DISinformation Analysis & Risk Management Framework (DISARM)

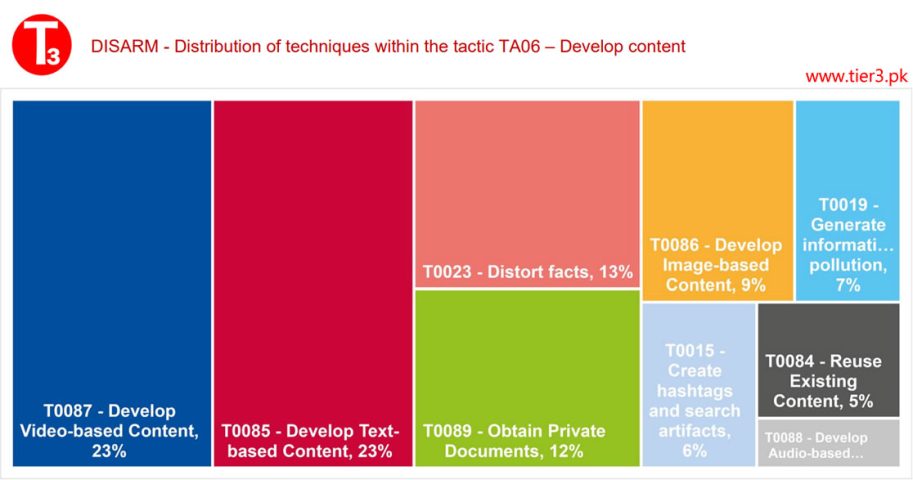

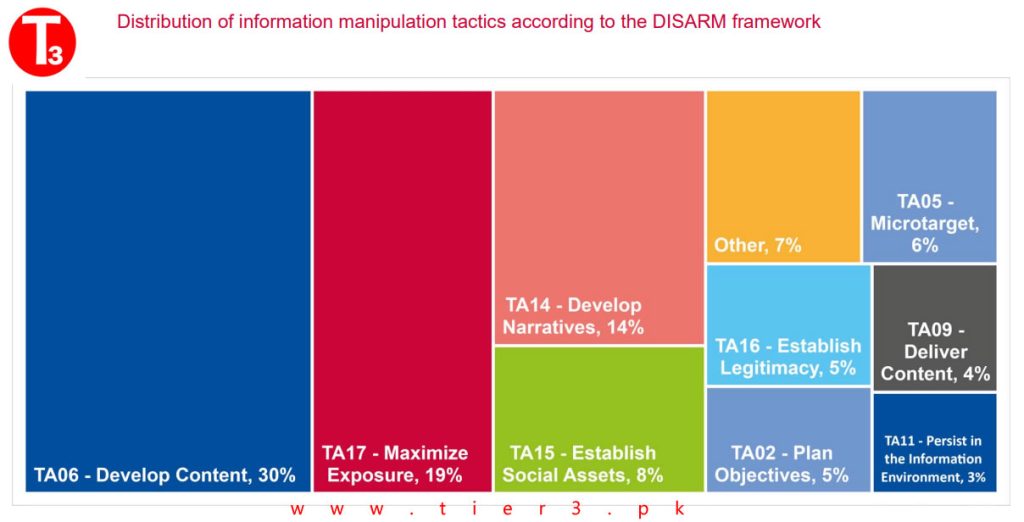

In terms of information manipulation, it is not surprising that the most commonly used tactic is content development, followed by maximizing exposure and the creation of narratives. The DISinformation Analysis & Risk Management Framework (DISARM) is a framework for analyzing and managing disinformation campaigns and their associated risks. It provides a structured approach to understanding, assessing, and mitigating the impact of disinformation on various aspects of society, including politics, business, and public perception. An analysis built on DISARM typically works through the following stages:

- Data Collection: Gathering relevant information and data related to the disinformation campaign. This may include analyzing social media posts, news articles, websites, and other sources of information.

- Content Analysis: Examining the content of disinformation, such as false narratives, fake news, manipulated images, and misleading claims. This stage aims to identify the specific tactics and techniques used in the campaign.

- Source Identification: Determining the sources of disinformation, which may include state-sponsored actors, political groups, or individuals. Understanding the source is crucial for assessing motives and potential consequences.

- Audience Analysis: Analyzing the target audience of the disinformation campaign, including their demographics, preferences, and vulnerabilities. This helps in understanding how the campaign aims to influence or manipulate its audience.

- Narrative Assessment: Evaluating the narratives and storylines used in the disinformation campaign. This involves understanding the messaging and the emotional appeal used to spread false information.

- Tactics and Techniques: Identifying the tactics and techniques used in the disinformation campaign, such as amplification through bots, fake accounts, deepfakes, and social engineering.

- Impact Assessment: Assessing the impact of the disinformation campaign on various stakeholders, including individuals, organizations, and society at large. This includes evaluating the potential harm or consequences of the disinformation.

- Risk Analysis: Determining the level of risk associated with the disinformation campaign. This involves considering factors like the campaign’s reach, credibility, and the potential for real-world harm.

- Countermeasures and Mitigation: Developing strategies and countermeasures to mitigate the impact of disinformation. This may include public awareness campaigns, content moderation, and legal actions.

- Monitoring and Evaluation: Continuously monitoring the evolving disinformation landscape, assessing the effectiveness of countermeasures, and adjusting strategies as needed to adapt to new disinformation tactics.
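The Risk Analysis stage above names three factors: reach, credibility, and potential for real-world harm. As a minimal sketch of how an analyst might combine them into a single rating, the Python below uses a weighted average. The `CampaignAssessment` class, the weights, and the thresholds are all illustrative assumptions; none of them are defined by DISARM or by this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CampaignAssessment:
    """Analyst-assigned scores, each on a 0.0-1.0 scale (hypothetical model)."""
    reach: float        # share of the target audience likely exposed
    credibility: float  # how believable the content appears
    harm: float         # potential for real-world harm


# Illustrative weights only; they are assumptions, not part of DISARM.
WEIGHTS = {"reach": 0.3, "credibility": 0.3, "harm": 0.4}


def risk_score(assessment: CampaignAssessment) -> float:
    """Return the weighted average of the three factors (0.0-1.0)."""
    for name in WEIGHTS:
        value = getattr(assessment, name)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return sum(WEIGHTS[name] * getattr(assessment, name) for name in WEIGHTS)


def risk_level(score: float) -> str:
    """Map a score to a coarse label using arbitrary example thresholds."""
    if score >= 0.7:
        return "high"
    if score >= 0.4:
        return "medium"
    return "low"


if __name__ == "__main__":
    example = CampaignAssessment(reach=0.8, credibility=0.5, harm=0.9)
    score = risk_score(example)
    print(f"{score:.2f} -> {risk_level(score)}")
```

In practice the weights and thresholds would need to be calibrated against an organization's own risk appetite; the point of the sketch is only that the three factors can be scored separately and then combined in a transparent way.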

The top three tactics in the DISinformation Analysis & Risk Management Framework (DISARM) are defined as follows (in order of frequency):

- TA06 – Develop Content: Create or acquire text, images, and other content.

- TA17 – Maximize Exposure: Increase the exposure of the target audience to incident or campaign content by flooding, amplifying, and cross-posting.

- TA14 – Develop Narratives: Promote beneficial master narratives to establish long-term strategic narrative dominance. From a misinformation campaign or cognitive security perspective, the tactics around master narratives center on the daily promotion and reinforcement of this messaging, covering a wide range of activities both online and offline.
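As an illustration of how such a frequency ranking might be produced, the sketch below tallies tactic IDs across a set of incident records. The incident data and the `rank_tactics` helper are hypothetical and chosen only to reproduce the ordering above; only the three tactic IDs and names come from the list.

```python
from collections import Counter

# Tactic IDs and names as given in the list above.
TACTIC_NAMES = {
    "TA06": "Develop Content",
    "TA14": "Develop Narratives",
    "TA17": "Maximize Exposure",
}

# Hypothetical incident records: each lists the tactic IDs an analyst observed.
incidents = [
    {"id": "inc-1", "tactics": ["TA06", "TA17"]},
    {"id": "inc-2", "tactics": ["TA06", "TA14", "TA17"]},
    {"id": "inc-3", "tactics": ["TA06", "TA17"]},
    {"id": "inc-4", "tactics": ["TA06", "TA17", "TA14"]},
    {"id": "inc-5", "tactics": ["TA06"]},
]


def rank_tactics(records):
    """Count how many incidents each tactic appears in, most frequent first.

    Ties are broken by tactic ID so the ordering is deterministic.
    """
    counts = Counter()
    for record in records:
        counts.update(set(record["tactics"]))  # count once per incident
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    for tactic_id, count in rank_tactics(incidents):
        print(f"{tactic_id} {TACTIC_NAMES[tactic_id]}: {count} incidents")
```

Counting each tactic once per incident, rather than once per mention, keeps a single noisy incident from dominating the ranking.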

AI-enabled Manipulation of Information

AI-enabled manipulation of information continues to be a concern, and the debate over the use of AI to manipulate information is intensifying both within and outside the professional community. The emergence of easy-to-access and easy-to-use AI tools that can generate highly realistic images or authoritative-sounding text from user prompts has fueled this discussion. The main worry is the rapid, voluminous, and scalable generation and dissemination of false or misleading content. AI’s ability to deliver tailored, one-to-one interactive disinformation can further amplify the diffusion and effectiveness of campaigns.

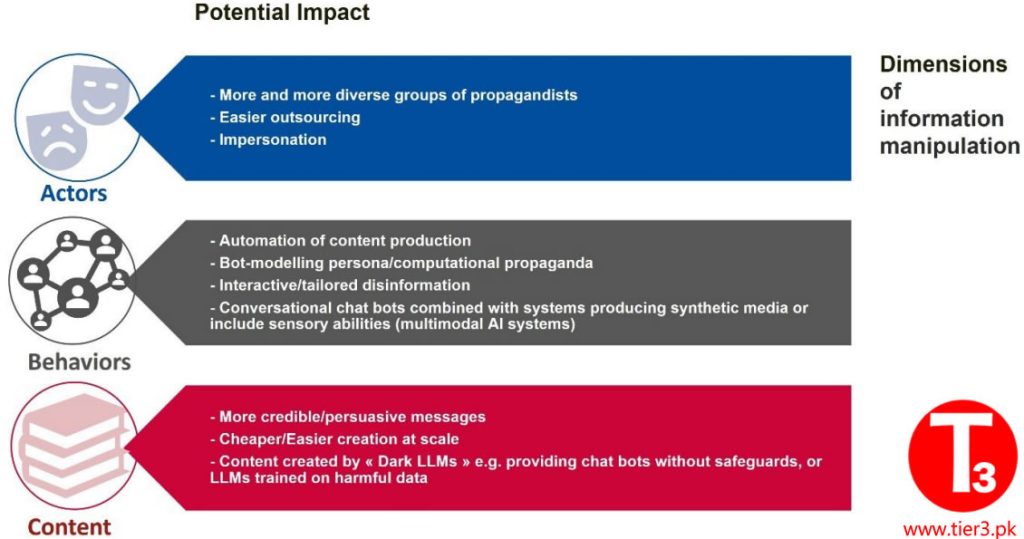

AI can potentially affect three aspects of information manipulation: the actors engaging in campaigns, their behaviors, and the content they produce.

Regarding behaviors, the analysis of incidents shows that creating inauthentic accounts and using bots to spread manipulated information are common practices. Regarding content, information manipulation has often involved purportedly leaked documents or images, but these are typically “cheap fakes” or “shallow fakes,” meaning they are miscontextualized or created with inexpensive software tools rather than with AI. The use of AI in such incidents cannot be ruled out, however, and the prevalence of AI-generated websites and information sources with minimal human oversight is noteworthy. At the same time, technologies for detecting and countering the use of AI for information manipulation have been researched and developed, and the competition between detection and manipulation tools remains an evolving challenge.
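To make the bot and inauthentic-account behavior described above more concrete, here is a minimal sketch of one simple heuristic: flag near-identical text posted by several distinct accounts within a short time window. The post structure, the `find_coordinated_posts` helper, and the default thresholds are assumptions made for illustration. This is not a method described in the incident analysis, and real detection tools rely on far richer signals.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial edits still match."""
    return " ".join(text.lower().split())


def find_coordinated_posts(posts, min_accounts=3, window=timedelta(minutes=10)):
    """Flag texts posted by at least `min_accounts` distinct accounts
    within `window` of each other.

    Returns a list of (normalized_text, sorted_account_names) pairs, using
    the first qualifying time window found for each text.
    """
    by_text = defaultdict(list)
    for post in posts:
        by_text[normalize(post["text"])].append(post)

    flagged = []
    for text, group in by_text.items():
        group.sort(key=lambda p: p["time"])
        start = 0
        for end in range(len(group)):
            # Slide the left edge forward until the span fits the window.
            while group[end]["time"] - group[start]["time"] > window:
                start += 1
            accounts = {p["account"] for p in group[start:end + 1]}
            if len(accounts) >= min_accounts:
                flagged.append((text, sorted(accounts)))
                break
    return flagged


# Hypothetical sample data for illustration only.
sample_posts = [
    {"account": "acct_a", "text": "Leaked memo PROVES it!", "time": datetime(2023, 10, 1, 12, 0)},
    {"account": "acct_b", "text": "leaked memo proves it!", "time": datetime(2023, 10, 1, 12, 2)},
    {"account": "acct_c", "text": "Leaked  memo proves it!", "time": datetime(2023, 10, 1, 12, 5)},
    {"account": "acct_d", "text": "Nice weather today", "time": datetime(2023, 10, 1, 12, 1)},
    {"account": "acct_a", "text": "leaked memo proves it!", "time": datetime(2023, 10, 1, 14, 0)},
]

if __name__ == "__main__":
    for text, accounts in find_coordinated_posts(sample_posts):
        print(f"{text!r} posted by {accounts}")
```

A heuristic like this produces false positives, for example when many genuine users share the same headline, so its output is best treated as a lead for human review rather than as a verdict.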